The backend for AI chat apps

Sessions, memory, context, streaming. One SDK. Focus on your product, not infrastructure.

// Initialize ChatStack in React
import { ChatStack } from '@chatstack/sdk'

const chat = new ChatStack({
  apiKey: process.env.CHATSTACK_KEY,
  memory: 'persistent',
})

// Create a session with context
const session = await chat.session.create({
  userId: 'user_123',
  context: 'premium-support',
})

// Stream a response
const stream = session.stream('Hello!')

The complete infrastructure for AI chat.

Simple for developers. Ready for production scale.

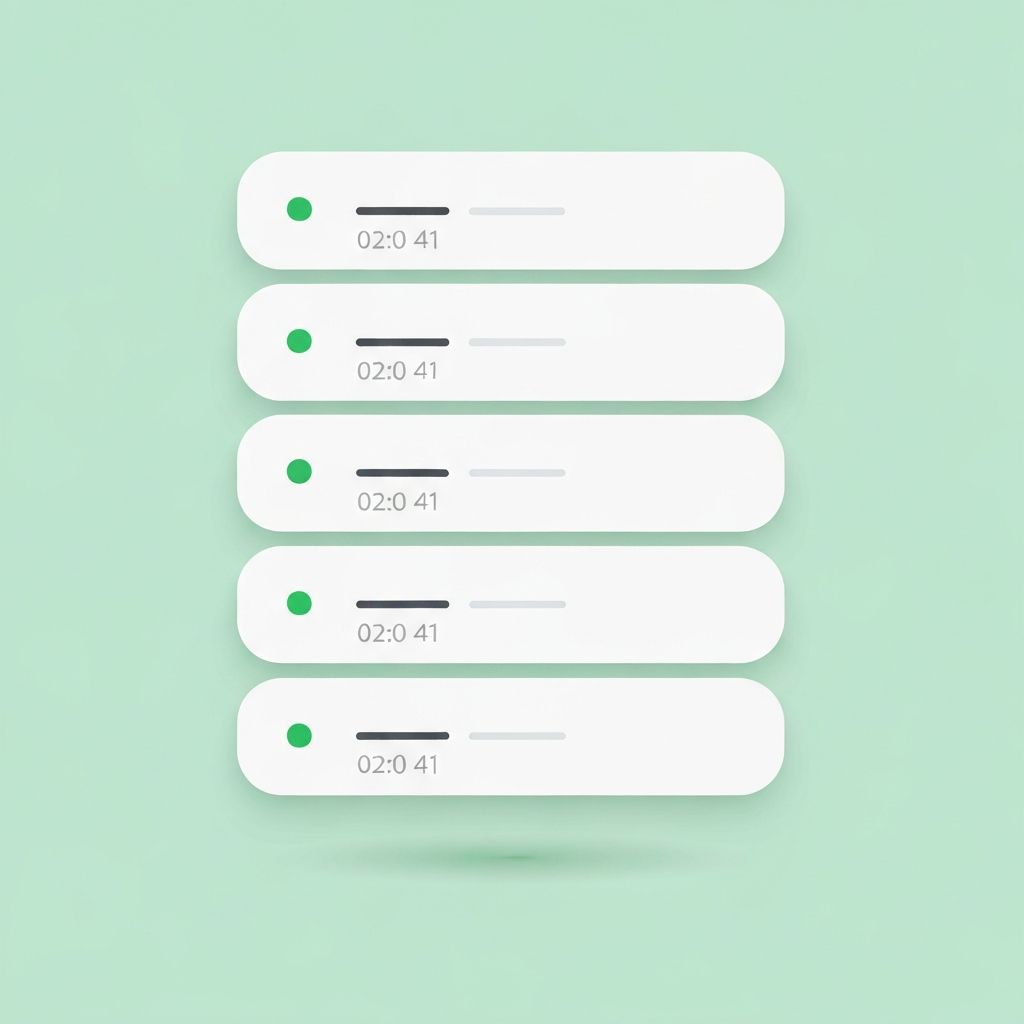

Sessions

Persistent chat sessions with built-in message history and automatic state management.

Memory

Vector-based long-term memory across conversations. Embeddings are generated automatically.
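To make "vector-based memory" concrete, here is a minimal sketch of how recall over stored embeddings could work in principle: rank stored items by cosine similarity to a query vector and keep the top few. The `MemoryItem` type and the `cosineSimilarity` and `recall` functions are hypothetical names for illustration, not part of the ChatStack SDK, and the embeddings are assumed to be computed elsewhere.

```typescript
// Illustrative only: hypothetical types and helpers, not the ChatStack API.
interface MemoryItem {
  text: string;
  embedding: number[]; // assumed to be produced by some embedding model
}

// Cosine similarity between two vectors of equal length.
function cosineSimilarity(a: number[], b: number[]): number {
  if (a.length !== b.length) throw new Error("embedding dimensions differ");
  let dot = 0;
  let normA = 0;
  let normB = 0;
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i];
    normA += a[i] * a[i];
    normB += b[i] * b[i];
  }
  if (normA === 0 || normB === 0) return 0; // avoid dividing by zero
  return dot / (Math.sqrt(normA) * Math.sqrt(normB));
}

// Return the k stored memories most similar to the query embedding.
function recall(query: number[], memories: MemoryItem[], k: number): MemoryItem[] {
  return memories
    .map((item) => ({ item, score: cosineSimilarity(query, item.embedding) }))
    .sort((x, y) => y.score - x.score)
    .slice(0, k)
    .map((scored) => scored.item);
}
```

A production system would typically replace the linear scan with an approximate nearest-neighbor index; the scan keeps the sketch short.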

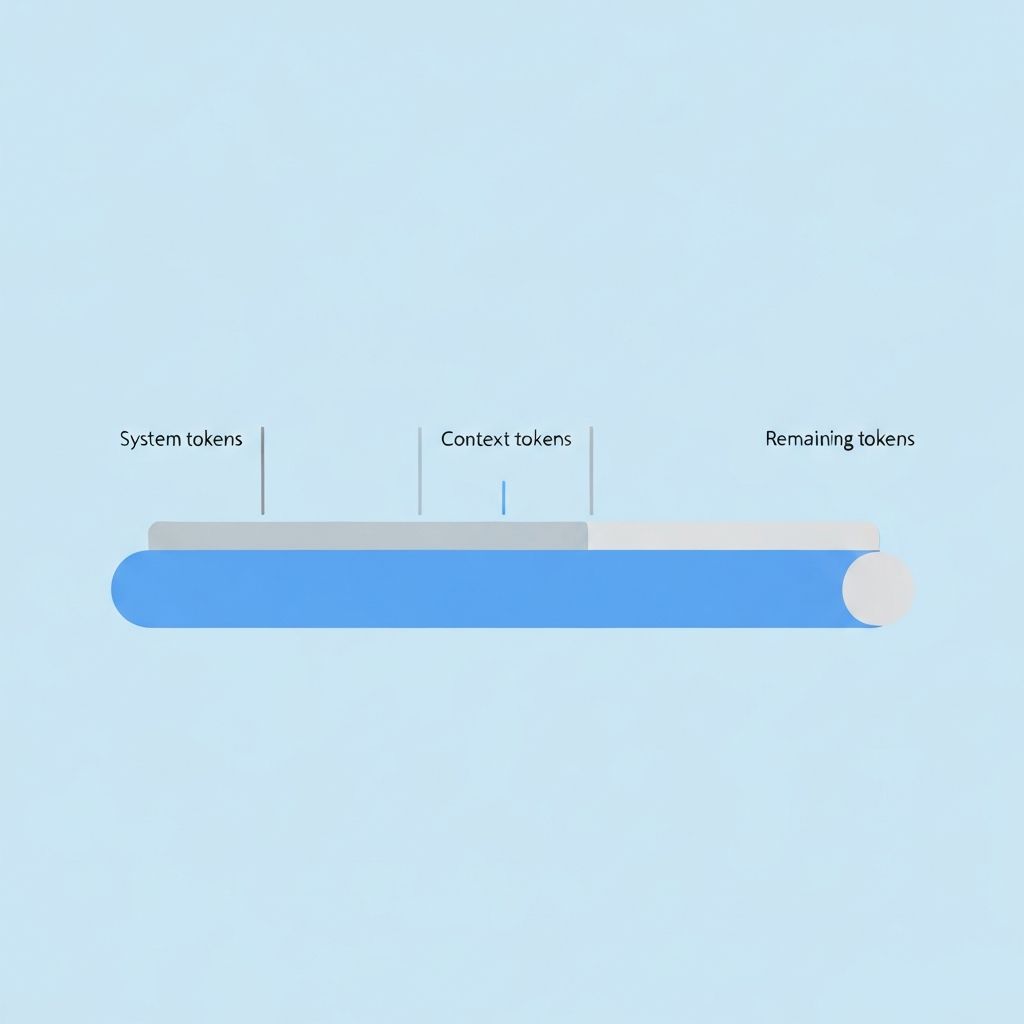

Context

Smart context windows that manage token limits and priorities automatically.
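As a rough illustration of managing token limits and priorities, the sketch below keeps the highest-priority messages that fit a token budget, prefers newer messages among equals, and then restores chronological order. Everything here is an assumption for illustration: the `ContextMessage` shape, the `fitToBudget` function, and the four-characters-per-token estimate are not taken from the ChatStack SDK.

```typescript
// Illustrative only: hypothetical types and helpers, not the ChatStack API.
interface ContextMessage {
  role: "system" | "user" | "assistant";
  content: string;
  priority: number; // higher values are kept first
}

// Crude estimate (assumption): roughly four characters per token.
function estimateTokens(text: string): number {
  return Math.ceil(text.length / 4);
}

// Keep the most important messages that fit within maxTokens.
function fitToBudget(messages: ContextMessage[], maxTokens: number): ContextMessage[] {
  // Rank by priority, breaking ties in favor of newer messages.
  const ranked = messages
    .map((message, index) => ({ message, index }))
    .sort((a, b) => b.message.priority - a.message.priority || b.index - a.index);

  const kept = new Set<number>();
  let used = 0;
  for (const { message, index } of ranked) {
    const cost = estimateTokens(message.content);
    if (used + cost <= maxTokens) {
      kept.add(index);
      used += cost;
    }
  }
  // Return the survivors in their original conversation order.
  return messages.filter((_, index) => kept.has(index));
}
```

A real tokenizer would replace `estimateTokens`; the selection logic stays the same.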

Streaming

First-class streaming with backpressure handling and graceful error recovery.
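One common way to get backpressure in JavaScript is pull-based async iteration: the producer only advances when the consumer asks for the next chunk. The sketch below shows that pattern with a stand-in `tokenSource` generator and a `collect` helper. Both names are hypothetical, and nothing here confirms how the ChatStack SDK exposes its streams.

```typescript
// Illustrative only: a stand-in stream, not the ChatStack API.
async function* tokenSource(tokens: string[]): AsyncGenerator<string> {
  for (const token of tokens) {
    // Execution pauses at each yield until the consumer requests more,
    // so a slow consumer naturally slows the producer.
    yield token;
  }
}

// Consume any async iterable of text chunks and join them.
async function collect(stream: AsyncIterable<string>): Promise<string> {
  let text = "";
  for await (const chunk of stream) {
    text += chunk;
  }
  return text;
}
```

In a UI, the body of the `for await` loop would append each chunk to the rendered message instead of a string.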

Multi-platform

SDKs for React, React Native, Flutter, and Kotlin. One API everywhere.

Token Counting

Track token usage per session, user, or API key in real time. Full analytics built in.
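Conceptually, per-session, per-user, and per-key tracking amounts to adding each usage event to three running totals. The sketch below shows one way to do that in memory. `UsageEvent` and `UsageTracker` are hypothetical names for illustration and do not describe the ChatStack implementation.

```typescript
// Illustrative only: hypothetical types, not the ChatStack API.
interface UsageEvent {
  sessionId: string;
  userId: string;
  apiKeyId: string;
  tokens: number;
}

type UsageScope = "session" | "user" | "key";

class UsageTracker {
  private readonly totals = new Map<string, number>();

  // Add one event's tokens to the session, user, and API key totals.
  record(event: UsageEvent): void {
    const keys = [
      `session:${event.sessionId}`,
      `user:${event.userId}`,
      `key:${event.apiKeyId}`,
    ];
    for (const key of keys) {
      this.totals.set(key, (this.totals.get(key) ?? 0) + event.tokens);
    }
  }

  // Read the running total for one scope and identifier.
  total(scope: UsageScope, id: string): number {
    return this.totals.get(`${scope}:${id}`) ?? 0;
  }
}
```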

Rate Limiting

Per-user and per-key rate limits with sliding windows. Abuse protection out of the box.
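For readers unfamiliar with sliding-window limits, here is a minimal sketch of the sliding-window-log variant: each key keeps the timestamps of its recent requests, and a request is allowed only if fewer than `limit` of them fall inside the last `windowMs` milliseconds. The `SlidingWindowLimiter` class is a hypothetical illustration, not the ChatStack implementation.

```typescript
// Illustrative only: a generic sliding-window-log limiter, not the ChatStack API.
class SlidingWindowLimiter {
  private readonly hits = new Map<string, number[]>();
  private readonly limit: number;
  private readonly windowMs: number;

  constructor(limit: number, windowMs: number) {
    this.limit = limit;
    this.windowMs = windowMs;
  }

  // Returns true and records the request if the key is under its limit.
  allow(key: string, now: number = Date.now()): boolean {
    const cutoff = now - this.windowMs;
    // Drop timestamps that have slid out of the window.
    const recent = (this.hits.get(key) ?? []).filter((t) => t > cutoff);
    const allowed = recent.length < this.limit;
    if (allowed) recent.push(now);
    this.hits.set(key, recent);
    return allowed;
  }
}
```

The same class covers both per-user and per-key limits by choosing what to pass as `key`, for example `user:user_123` or an API key identifier.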

Billing & Tiers

Manage free, pro, and enterprise tiers. Metered billing with usage caps and overage handling.

Up and running in minutes

Three steps. No infrastructure to manage.

Install the SDK

Add ChatStack to your project with a single package install. Works with any framework.

npm install @chatstack/sdk

Initialize & connect

Configure your API key and set your memory and streaming preferences. One config, you're ready.

const chat = new ChatStack({ apiKey })

Ship your product

Create sessions, stream responses, and let ChatStack handle the infrastructure. Focus on your UX.

const stream = session.stream(message)

Start building today

Join the waitlist for early access. Be the first to ship AI chat apps with zero infrastructure headaches.

Free during early access. No credit card required.